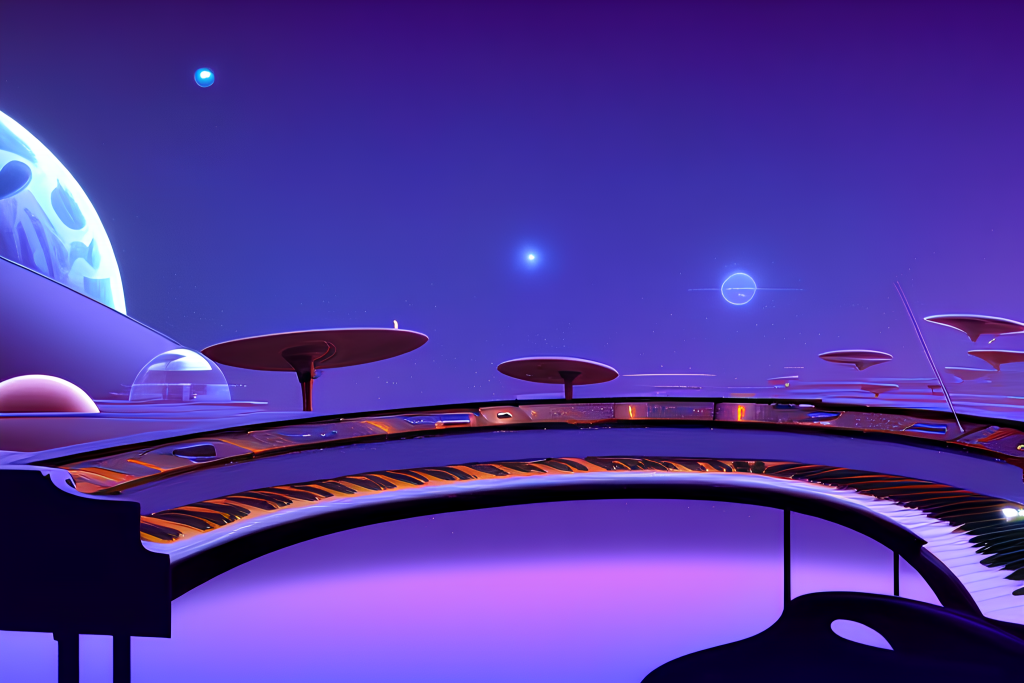

Researchers at Google have developed and released the details of an AI system that can turn text descriptions into music.

The model, called MusicLM, was trained with the help of MuLan, a model that links music to matching text descriptions. The team also released MusicCaps, a public dataset of music clips paired with descriptions written by professional musicians.

The researchers evaluated the music generated by MusicLM on audio quality and on how closely it followed the text descriptions. They also acknowledge that the model is not perfect and inherits the biases of the data used to train it.

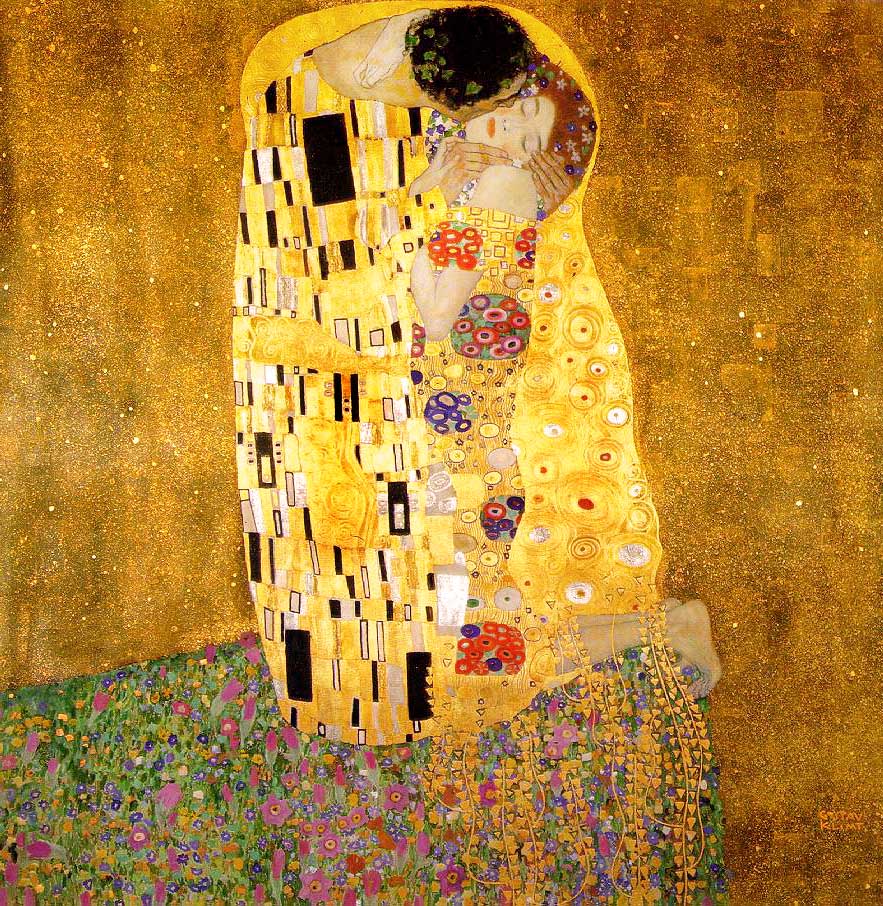

The team has released examples of music generated from text descriptions of famous paintings, including The Persistence of Memory by Salvador Dali, The Scream by Edvard Munch, and The Starry Night by Vincent van Gogh.

Below is the team’s clip that was generated from a description of Gustav Klimt’s The Kiss:

For more examples and background on the project, see the project’s paper here.