If you’ve used ChatGPT for longer projects, you’ve probably noticed that a prompt like “please give me 10,000 words on the health impacts of deep breathing” might return 1,200, 3,000, or 4,203 words, or anything in between.

This happens because ChatGPT operates on tokens, not words, so “word count” is kind of irrelevant to it. The number of tokens per word varies with the length and complexity of the word, which makes it difficult to predict the length of the output in words. Even if you add something like “exactly” or “precisely” to your prompt, you’re unlikely to get the length you asked for.

To better manage the length of your output, it helps to think a bit mathematically. This is where token counts come in handy.

How To Use Tokens In ChatGPT To Achieve Better Word Counts

Tokens are essentially fragments of words that OpenAI/ChatGPT uses to measure the length of a piece of text. Tokens don’t always start or end at word boundaries: a single token can include a leading space or only part of a word. Here are a few general guidelines to help you understand how tokens relate to length in written content.

- 1 token ~= 4 chars in English

- 1 token ~= ¾ of a word

- 100 tokens ~= 75 words

Or

- 1-2 sentences ~= 30 tokens

- 1 paragraph ~= 100 tokens

- 1,500 words ~= 2048 tokens
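If you’d rather not do the math in your head, the rules of thumb above can be sketched in a few lines of Python. This is a rough estimator built only on the ¾-words-per-token and 4-characters-per-token approximations, not a real tokenizer, and the function names are made up for this example.

```python
import math

# Rules of thumb from the list above; real token counts vary by text and model.
WORDS_PER_TOKEN = 0.75
CHARS_PER_TOKEN = 4


def tokens_for_words(word_count: int) -> int:
    """Estimate how many tokens a given number of English words will use."""
    return math.ceil(word_count / WORDS_PER_TOKEN)


def words_for_tokens(token_count: int) -> int:
    """Estimate how many English words fit in a token budget."""
    return math.floor(token_count * WORDS_PER_TOKEN)


def tokens_for_text(text: str) -> int:
    """Estimate the token count of existing text from its character length."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


if __name__ == "__main__":
    print(tokens_for_words(1500))  # 2000
    print(words_for_tokens(100))   # 75
```

Note that the estimate for 1,500 words comes out at 2,000 tokens rather than 2,048, which is a good reminder that these ratios are approximations.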

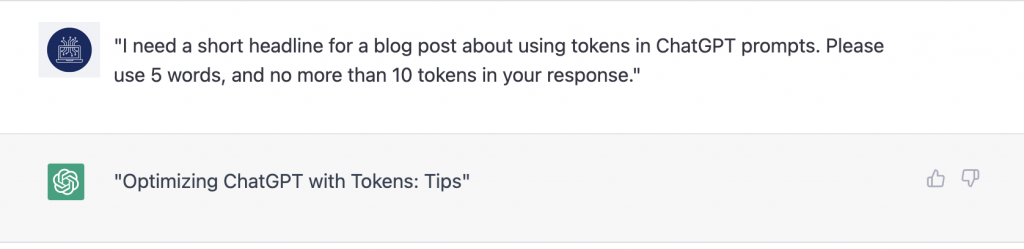

Let’s say you need a 5-word headline and ChatGPT keeps generating headlines that are either too long or too short. You could try adding a token length to the prompt.

For example:

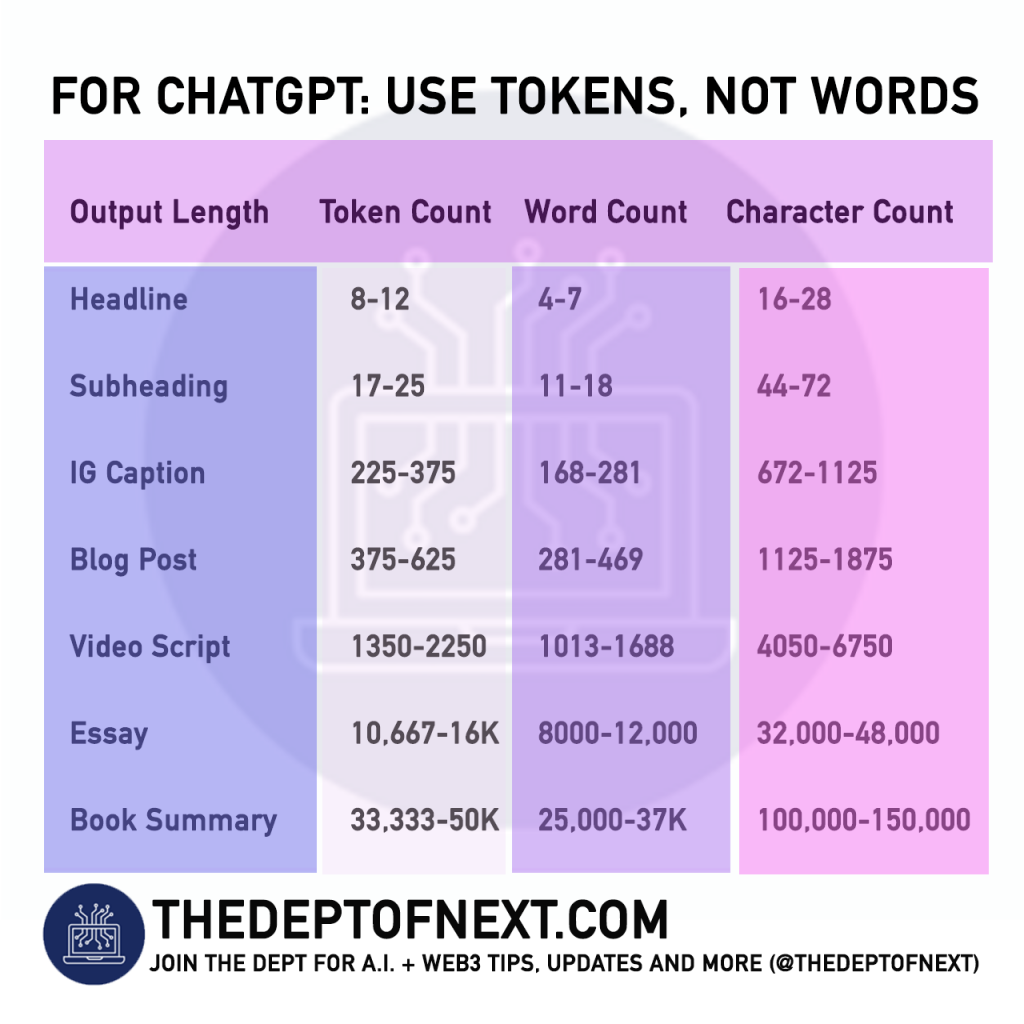
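If you write a lot of prompts, you could script this step. The sketch below turns a word target into an approximate token target and appends it to your prompt. The `build_prompt` helper and the exact wording it adds are assumptions for illustration, not the phrasing from the screenshot.

```python
import math

# Rule-of-thumb ratio (1 token ~= 3/4 of a word); an approximation only.
WORDS_PER_TOKEN = 0.75


def build_prompt(task: str, target_words: int) -> str:
    """Append an approximate token target to a task description."""
    if target_words <= 0:
        raise ValueError("target_words must be positive")
    target_tokens = math.ceil(target_words / WORDS_PER_TOKEN)
    return (
        f"{task} Keep it to about {target_tokens} tokens "
        f"(roughly {target_words} words)."
    )


if __name__ == "__main__":
    print(build_prompt("Write a headline about deep breathing.", 5))
```

For a 5-word headline this asks for about 7 tokens. You’ll likely still need to tweak the number up or down after seeing a few outputs.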

Here’s a general chart for some common lengths:

| Output Length | Token Count | Word Count | Character Count |
|---|---|---|---|
| Headline | 8-12 | 4-7 | 16-28 |
| Subheading | 17-25 | 11-18 | 44-72 |
| Instagram Caption | 225-375 | 168-281 | 672-1125 |
| Blog Post | 375-625 | 281-469 | 1125-1875 |
| 7-Minute YouTube Script | 1350-2250 | 1013-1688 | 4050-6750 |
| Essay | 10,667-16,000 | 8000-12,000 | 32,000-48,000 |
| Long Book Summary | 33,333-50,000 | 25,000-37,500 | 100,000-150,000 |

These numbers are all approximations, but you’ll generally find that asking for tokens instead of word counts gives you more control over the output and more accurate lengths.
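Since everything here is approximate, it can help to measure how far a response actually landed from your target before adjusting the token number in your next prompt. Here’s a minimal sketch that counts whitespace-separated words. The `length_report` name and the report format are invented for this example.

```python
def length_report(text: str, target_words: int) -> dict:
    """Compare the word count of a response against a target length."""
    if target_words <= 0:
        raise ValueError("target_words must be positive")
    words = len(text.split())  # simple whitespace-based word count
    deviation = (words - target_words) / target_words
    return {
        "words": words,
        "target": target_words,
        "deviation_pct": round(deviation * 100, 1),
    }


if __name__ == "__main__":
    headline = "Breathe Deeper, Live Better Today"
    print(length_report(headline, 5))
```

A negative `deviation_pct` means the response came in short, so you might raise the token target next time. A positive value means the opposite.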


